How to scrape Google Maps data using Python

Millions of people use Google Maps daily, leaving behind a goldmine of data just waiting to be analyzed. In this guide, I'll show you how to build a reliable scraper using Crawlee and Python to extract locations, ratings, and reviews from Google Maps, all while handling its dynamic content challenges.

One of our community members wrote this post as a contribution to the Crawlee Blog. If you would like to contribute posts like this one, please reach out to us on our Discord channel.

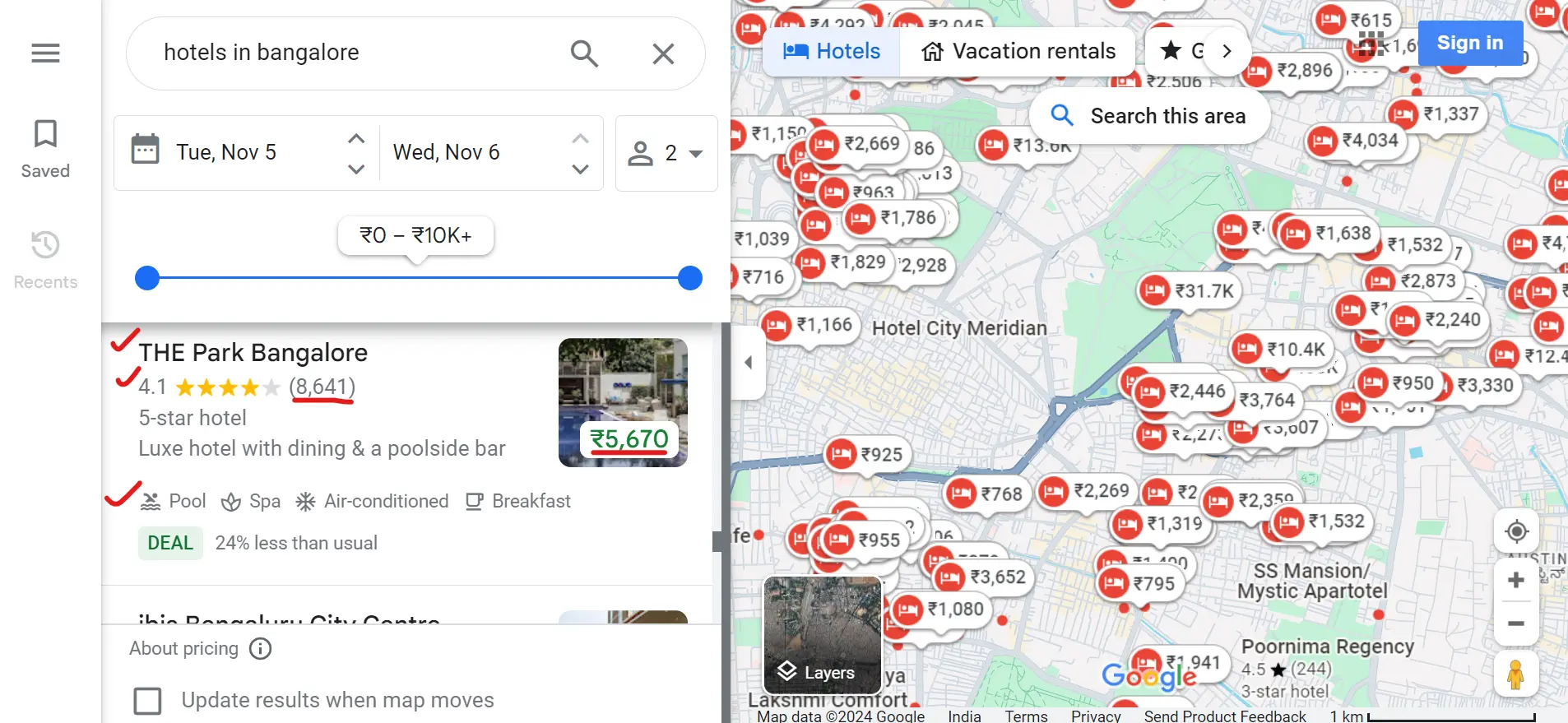

What data will we extract from Google Maps?

We’ll collect information about hotels in a specific city. You can also customize your search to meet your requirements. For example, you might search for "hotels near me", "5-star hotels in Bombay", or other similar queries.

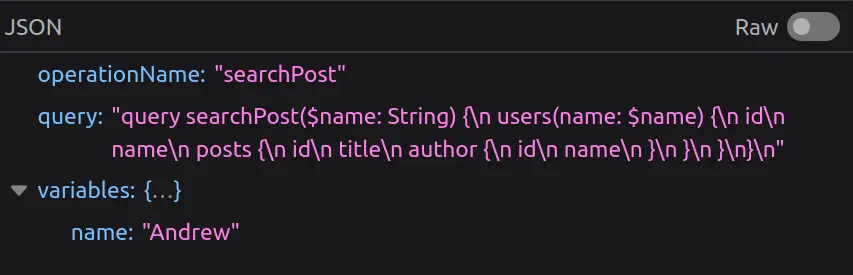
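Since each of these searches is just a free-text query, one way to make the query configurable is to build the search URL from it. The snippet below is a minimal sketch, not necessarily the approach used later in this guide: it assumes Google's documented Maps URLs search format (`api=1` plus a `query` parameter), and `build_search_url` is a hypothetical helper name introduced here for illustration.

```python
from urllib.parse import urlencode

# Base endpoint of Google's documented "Maps URLs" search action (assumed here).
MAPS_SEARCH_BASE = "https://www.google.com/maps/search/"


def build_search_url(query: str) -> str:
    """Return a Google Maps search URL for a free-text query (hypothetical helper)."""
    # urlencode escapes spaces and special characters in the query for us.
    return MAPS_SEARCH_BASE + "?" + urlencode({"api": 1, "query": query})


if __name__ == "__main__":
    for query in ["hotels near me", "5-star hotels in Bombay"]:
        print(build_search_url(query))
```

Keeping the query as a plain string means you can change the city or the type of place without touching the rest of the scraper.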

We’ll extract important data, including the hotel name, rating, review count, price, a link to the hotel page on Google Maps, and all available amenities. Here’s an example of what the extracted data will look like:

    {
        "name": "Vividus Hotels, Bangalore",
        "rating": "4.3",
        "reviews": "633",
        "price": "₹3,667",
        "amenities": [
            "Pool available",
            "Free breakfast available",
            "Free Wi-Fi available",
            "Free parking available"
        ],
        "link": "https://www.google.com/maps/place/Vividus+Hotels+,+Bangalore/..."
    }
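Note that the rating and review count in the record above are strings. If you plan to sort or filter the results, it can help to convert them into typed values. Here is a minimal sketch of one way to do that. The `HotelRecord` class and `parse_record` function are hypothetical names introduced for illustration, and the sketch assumes every record has the same keys as the example.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class HotelRecord:
    """Typed view of one scraped hotel (hypothetical structure)."""
    name: str
    rating: Optional[float]
    reviews: Optional[int]
    price: Optional[str]
    link: str
    amenities: List[str] = field(default_factory=list)


def parse_record(raw: dict) -> HotelRecord:
    """Convert a raw scraped dict into a HotelRecord."""
    rating = raw.get("rating")
    reviews = raw.get("reviews")
    return HotelRecord(
        name=raw["name"],
        # Missing or empty values become None instead of raising.
        rating=float(rating) if rating else None,
        # Strip thousands separators such as "1,204" before converting.
        reviews=int(reviews.replace(",", "")) if reviews else None,
        # The price keeps its currency symbol, so it stays a string.
        price=raw.get("price"),
        link=raw["link"],
        amenities=list(raw.get("amenities", [])),
    )


# Same values as the example record above (the link is truncated in the source).
sample = {
    "name": "Vividus Hotels, Bangalore",
    "rating": "4.3",
    "reviews": "633",
    "price": "₹3,667",
    "amenities": [
        "Pool available",
        "Free breakfast available",
        "Free Wi-Fi available",
        "Free parking available",
    ],
    "link": "https://www.google.com/maps/place/Vividus+Hotels+,+Bangalore/...",
}

if __name__ == "__main__":
    print(parse_record(sample))
```

The price is left as text because it carries a currency symbol and locale-specific formatting, so parsing it into a number would need extra rules.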